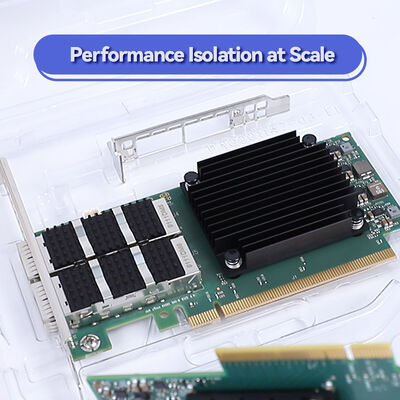

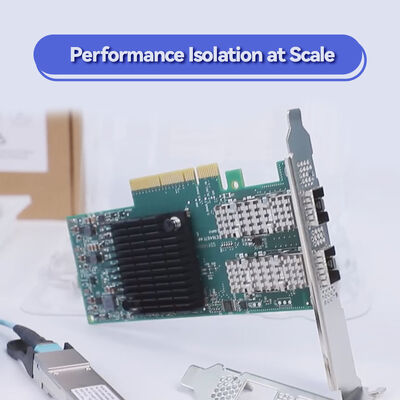

NVIDIA ConnectX-7 NDR 400Gb/s InfiniBand Adapter MCX75310AAS-HEAT | PCIe Gen5 Dual-Port

Product Details:

| Brand Name: | Mellanox |
|---|---|
| Model Number: | MCX75310AAS-HEAT(900-9X766-003N-ST0) |
| Document: | Connectx-7 infiniband.pdf |

Payment & Shipping Terms:

| Minimum Order Quantity: | 1 pc |
|---|---|
| Price: | Negotiate |
| Packaging Details: | outer box |
| Delivery Time: | Based on inventory |
| Payment Terms: | T/T |
| Supply Ability: | Supply by project/batch |

Detail Information

| Model NO.: | MCX75310AAS-HEAT(900-9X766-003N-ST0) | Ports: | Single-Port |
|---|---|---|---|
| Technology: | InfiniBand | Interface Type: | OSFP56 |
| Specification: | 16.7 cm x 6.9 cm | Origin: | India / Israel / China |
| Transmission Rate: | 200GbE | Host Interface: | Gen3 x16 |

Product Description

Ultra-low latency RDMA networking for AI factories, HPC clusters, and hyperscale cloud. Supports InfiniBand NDR (400Gb/s) and Ethernet up to 400GbE. In-Network Computing engines, hardware security acceleration, and GPUDirect® Storage ready.

The NVIDIA ConnectX-7 family redefines data center interconnectivity with up to 400Gb/s bandwidth, hardware-based reliable transport, and advanced in-network computing. The model MCX75310AAS-HEAT belongs to the ConnectX-7 VPI adapter series supporting both InfiniBand (NDR/HDR/EDR) and Ethernet (400GbE to 10GbE). Built for AI, scientific computing, and modern software-defined storage, it delivers sub‑microsecond latency and frees CPU cores via acceleration engines for security, virtualization, and NVMe‑oF. With PCIe Gen5 host interface, this adapter maximizes data movement for GPU clusters and high‑frequency trading environments.

Leveraging ASAP² (Accelerated Switch and Packet Processing) and Zero-Touch RoCE, the adapter simplifies deployment while providing inline hardware encryption – from edge to core. Enterprises can future-proof their infrastructure with NDR speeds and flexible multi‑host support.

- Dual-port NDR 400Gb/s InfiniBand or 400GbE, PCIe Gen5 x16 (up to 32 lanes).

- SHARPv3 collective operations offload, rendezvous protocol offload, on‑board memory for bursts.

- Inline IPsec/TLS/MACsec AES-GCM 128/256-bit, secure boot with hardware root-of-trust, flash encryption.

- IEEE 1588v2 PTP (12 ns accuracy), SyncE, G.8273.2 Class C, time-triggered pacing.

- GPUDirect RDMA, GPUDirect Storage, NVMe‑oF offload, T10-DIF signature handover.

- VXLAN, GENEVE, NVGRE, programmable flexible parser, connection tracking (L4 firewall).

NVIDIA ASAP² (Accelerated Switch and Packet Processing) offloads the SDN data path to the adapter, delivering line-rate performance while keeping the host CPU out of the forwarding path. ConnectX-7 integrates advanced memory virtualization: On-Demand Paging (ODP), User-Mode Memory Registration (UMR), and Address Translation Services (ATS) for efficient containerized workloads. With support for SR-IOV, VirtIO acceleration, and Multi-Host technology (up to 4 hosts), the adapter enables bare-metal performance in virtualized environments. The hardware RoCE engine allows zero-touch deployment on converged Ethernet fabrics, while the on-board memory reduces latency for MPI and collective communication libraries (NVIDIA HPC-X, UCX, NCCL).

To address security from edge to core, inline cryptography engines handle IPsec, TLS 1.3, and MACsec without consuming execution cores, making it ideal for multi‑tenant clouds and financial services.

- AI & Large Language Model clusters: RDMA & GPUDirect accelerating distributed training across hundreds of GPUs.

- High‑Performance Computing (HPC): MPI offloads, SHARPv3 for in‑network reduction, adaptive routing.

- Cloud and Edge data centers: Overlay network acceleration, VXLAN offload, SR-IOV.

- Software‑defined storage (SDS): NVMe/TCP, NVMe‑oF, iSER, NFS over RDMA.

- Real‑time analytics & trading: Ultra-low latency + PTP class C synchronization.

| Component / Ecosystem | Supported Options |
|---|---|
| Host Interface | PCIe Gen5 (up to x32 lanes, x16 typical), PCIe bifurcation, Multi-Host (4 hosts) |
| Operating Systems | Linux (RHEL, Ubuntu, in-box drivers), Windows Server, VMware ESXi (SR-IOV), Kubernetes |
| Virtualization & Containers | SR-IOV, VirtIO acceleration, NVMe over TCP offload for containers |
| HPC Middleware / Libraries | Open MPI, MVAPICH, MPICH, UCX, NCCL, UCC, OpenSHMEM, PGAS |
| Storage Protocols | NVMe‑oF (RDMA/TCP), SRP, iSER, NFS over RDMA, SMB Direct, GPUDirect Storage |

| Parameter | Specification |
|---|---|
| Product Model | MCX75310AAS-HEAT |
| Form Factors | PCIe HHHL / FHHL, OCP 3.0 SFF/TSFF (refer to ordering guide) |
| InfiniBand Speeds | NDR 400Gb/s, HDR 200Gb/s, EDR 100Gb/s |
| Ethernet Speeds | 400GbE, 200GbE, 100GbE, 50GbE, 25GbE, 10GbE (NRZ/PAM4) |
| Network Ports | 1/2/4 port configurations (dependent on SKU; 2-port typical for this model) |
| Host Bus | PCI Express Gen 5.0 (compatible with Gen4/Gen3), up to x32 lanes, TLP processing hints, ATS, PASID |
| Security Offloads | Inline IPsec (AES-GCM 128/256), TLS 1.3, MACsec, secure boot, flash encryption, device attestation |
| RDMA / Transport | Hardware reliable transport, XRC, DCT, On-Demand Paging, UMR, GPUDirect RDMA |
| Timing & Sync | IEEE 1588v2 PTP, G.8273.2 Class C, 12 ns accuracy, SyncE, PPS in/out, time-triggered scheduling |
| Management | NC-SI, MCTP over PCIe/SMBus, PLDM (DSP0248/0267/0218), SPDM, SPI flash, JTAG |
| Remote Boot | InfiniBand remote boot, iSCSI, UEFI, PXE |
| Open Networking | ASAP² for SDN, VXLAN/GENEVE/NVGRE offload, flow mirroring, hierarchical QoS, L4 connection tracking |
| Power & Environmental | Refer to user manual (typical ~15W-25W depending on configuration), operating temp: 0°C to 55°C (not publicly specified, confirm per SKU) |

| Part Number Example | Ports / Speed | Form Factor | Typical Use |
|---|---|---|---|
| MCX75310AAS-HEAT | NDR 400Gb/s dual-port, PCIe 5.0 x16 | PCIe HHHL / FHHL | Top‑of‑rack AI & HPC, GPU clusters |
| MCX75340AAS-HEAT (example) | 4‑port NDR200 / 200GbE | OCP 3.0 | Hyperscale & multi-host |
| Other ConnectX-7 SKUs | Single/dual port, 100G/200G/400G | OCP, FHHL | Enterprise, storage appliances |

For help choosing the correct SKU (cable support, bracket type, tall or short bracket), contact the Starsurge team.

- ✅ Genuine & certified parts – full warranty, secure supply chain.

- ✅ Pre‑sales architecture consulting – HPC / AI cluster design, RDMA tuning.

- ✅ Global logistics & fast fulfillment – stocks in Asia/Europe/Americas.

- ✅ Custom firmware & OEM options – request bulk configuration.

- ✅ Post‑sales technical support – driver integration, firmware updates, RMA.

Hong Kong Starsurge Group provides full lifecycle support: from compatibility validation to deployment and ongoing maintenance. Our engineers assist with:

- InfiniBand fabric design and performance optimization.

- RoCE configuration for Ethernet environments.

- Integration with GPUDirect Storage and NVMe‑oF targets.

- 3-year standard warranty plus extended coverage available.

Volume pricing, bulk shipping, and customization (brackets, pull-tabs) are available upon request.

InfiniBand NDR natively includes advanced in-network computing features such as SHARP, adaptive routing, and hardware reliable transport; Ethernet mode uses RoCE for RDMA at the same line rate. ConnectX-7 supports both modes through its dual-personality (VPI) design.

The adapter is backward compatible with PCIe Gen4 and Gen3 slots, but runs at the lower per-lane speed of the older generation. Best performance is achieved in a PCIe Gen5 slot.
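The PCIe backward-compatibility point can be checked with quick arithmetic. The sketch below is illustrative only: it uses the published per-lane signaling rates for PCIe Gen3, Gen4, and Gen5 together with their 128b/130b line encoding, and it ignores packet (TLP/DLLP) overhead, so real throughput is somewhat lower. The function names `pcie_gbps` and `can_sustain` are hypothetical and exist only for this example.

```python
# Approximate one-direction PCIe link bandwidth, before TLP/DLLP overhead.
# Assumption: Gen3, Gen4 and Gen5 all use 128b/130b line encoding.
GT_PER_LANE = {"Gen3": 8.0, "Gen4": 16.0, "Gen5": 32.0}  # GT/s per lane
ENCODING_EFFICIENCY = 128 / 130


def pcie_gbps(generation: str, lanes: int = 16) -> float:
    """Return the approximate one-direction link bandwidth in Gb/s."""
    return GT_PER_LANE[generation] * lanes * ENCODING_EFFICIENCY


def can_sustain(generation: str, port_gbps: float, lanes: int = 16) -> bool:
    """True if the slot's raw bandwidth is at least the network port rate."""
    return pcie_gbps(generation, lanes) >= port_gbps


if __name__ == "__main__":
    for gen in GT_PER_LANE:
        bw = pcie_gbps(gen)
        print(f"{gen} x16: {bw:6.1f} Gb/s, sustains 400G: {can_sustain(gen, 400)}")
```

Under these assumptions a Gen5 x16 slot offers roughly 504 Gb/s per direction, a Gen4 x16 slot roughly 252 Gb/s, and a Gen3 x16 slot roughly 126 Gb/s, so only a Gen5 x16 link leaves headroom for a 400 Gb/s port.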

ConnectX-7 supports NVIDIA Multi-Host technology, allowing up to 4 independent hosts to share the same adapter (dependent on the specific SKU and cabling). Please confirm with the ordering guide.

Inline encryption is available for IPsec (AES-GCM 128/256-bit), TLS (AES-GCM 128/256-bit), and MACsec (AES-GCM 128/256-bit), all offloaded from the CPU.

ConnectX-7 offloads NVMe over TCP as well as NVMe‑oF, reducing host CPU overhead for storage.

- Ensure proper airflow in the server chassis, especially when using high-power optical transceivers or active optical cables.

- Use firmware provided by NVIDIA or validated by Starsurge so that security fixes are included.

- Install the latest driver (MLNX_OFED or native inbox) to enable full hardware offloads.

- Verify QSFP-DD or OSFP compatibility for 400G optics; refer to the NVIDIA Ethernet transceiver guide.

- Anti-static handling is required: the adapter contains static-sensitive components.
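After installing the driver, one way to confirm that the ports came up at the expected rate is to read the Linux RDMA sysfs tree. The following is a minimal sketch, assuming a Linux host where the kernel exposes `/sys/class/infiniband/<device>/ports/<n>/` with `state`, `rate`, and `link_layer` files. The helper names (`parse_rate`, `list_ports`) are hypothetical, and the sample rate string in the comment is illustrative rather than captured from this adapter.

```python
import re
from pathlib import Path

# Standard location of RDMA devices on Linux; absent if no driver is loaded.
SYSFS_IB = Path("/sys/class/infiniband")

# Matches strings such as "400 Gb/sec (4X NDR)" (illustrative sample).
RATE_RE = re.compile(r"^\s*([\d.]+)\s*Gb/sec(?:\s*\((\d+)X\s+(\w+)\))?")


def parse_rate(text):
    """Parse a sysfs 'rate' string into Gb/s, lane width and speed name."""
    match = RATE_RE.match(text)
    if match is None:
        return None
    width = int(match.group(2)) if match.group(2) else None
    return {"gbps": float(match.group(1)), "width": width, "speed": match.group(3)}


def _read(path):
    """Return stripped file contents, or an empty string if unreadable."""
    try:
        return path.read_text().strip()
    except OSError:
        return ""


def list_ports(root=SYSFS_IB):
    """Collect state, link layer and parsed rate for every RDMA port found."""
    ports = []
    if not root.is_dir():
        return ports
    for dev in sorted(root.iterdir()):
        ports_dir = dev / "ports"
        if not ports_dir.is_dir():
            continue
        for port in sorted(ports_dir.iterdir()):
            ports.append({
                "device": dev.name,
                "port": port.name,
                "state": _read(port / "state"),
                "link_layer": _read(port / "link_layer"),
                "rate": parse_rate(_read(port / "rate")),
            })
    return ports


if __name__ == "__main__":
    for p in list_ports():
        print(p)
```

On a host without RDMA devices the script prints nothing. A port that reports a lower rate than expected is usually worth checking against the cable, the switch port configuration, and the firmware version before suspecting the adapter.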

Hong Kong Starsurge Group Co., Limited is a technology-driven provider of network hardware, IT services, and system integration solutions. Founded in 2008, the company serves customers worldwide with products including network switches, NICs, wireless access points, controllers, cables, and related equipment. Backed by an experienced sales and technical team, Starsurge supports industries such as government, healthcare, manufacturing, education, finance, and enterprise. The company also offers IoT solutions, network management systems, custom software development, multilingual support, and global delivery. With a customer-first approach, Starsurge focuses on reliable quality, responsive service, and tailored solutions that help clients build efficient, scalable, and dependable network infrastructure.

✓ Authorized partner for leading brands including NVIDIA networking products.

| Max Throughput | 400 Gb/s (NDR / 400GbE) | PCIe Gen5 | Up to 32 lanes |
|---|---|---|---|
| RDMA Support | InfiniBand & RoCE | Security | IPsec/TLS/MACsec inline |
| GPUDirect | RDMA + Storage | Timing Accuracy | 12 ns PTP |

| Component | Recommended / Supported | Remarks |
|---|---|---|
| GPU Servers (HGX/DGX) | Fully compatible with GPUDirect | SHARP offload on InfiniBand |
| Top-of-Rack Switches | NVIDIA QM9700 (NDR), SN4000 (Ethernet) | Supported |
| Virtualization hosts | VMware ESXi 7.0/8.0, KVM, Hyper-V | SR-IOV enabled |
| Storage Systems | PureStorage, VAST Data, WEKA, Dell PowerScale | NVMe-oF / RoCE ready |
| Cables / Optics | NVIDIA passive copper DAC, active optical (400G SR4/DR4) | Confirm compatibility matrix |

- Confirm port configuration (2-port NDR or split 2x200G) matches your switch plan.

- Confirm PCIe slot physical length and bracket type (HHHL / FHHL).

- Check airflow direction and passive/active cable requirements.

- Ensure OS driver support (MLNX_OFED version for your kernel).

- For security features: enable secure boot & inline crypto in firmware.

- Review warranty and advance replacement options.

64-port 400G InfiniBand, SHARPv3 integration.

Cost‑effective 200Gb/s for smaller clusters.

Storage & security acceleration + programmable data path.

Compliant OSFP to 2xOSFP or 2xQSFP56.