NVIDIA Mellanox ConnectX-6 MCX653106A-HDAT Dual-Port 200Gb/s InfiniBand Smart Adapter, PCIe 4.0 x16, In-Network Computing

Product Details:

| Brand Name: | Mellanox |
|---|---|
| Model Number: | MCX653106A-HDAT-SP |
| Document: | connectx-6-infiniband.pdf |

Payment & Shipping Terms:

| Minimum Order Quantity: | 1 pc |
|---|---|
| Price: | Negotiable |
| Packaging Details: | Outer box |
| Delivery Time: | Based on inventory |
| Payment Terms: | T/T |
| Supply Ability: | Supply by project/batch |

Detail Information

| Product Status: | In Stock | Application: | Server |
|---|---|---|---|
| Condition: | New and Original | Type: | Wired |
| Max Speed: | Up to 200Gb/s | Ethernet Connector: | QSFP56 |
| Model: | MCX653106A-HDAT | Name: | MCX653106A-HDAT-SP Mellanox Network Card 200GbE High Speed Smart Safe |
| Highlight: | Mellanox ConnectX-6 network adapter, 200Gb/s InfiniBand smart adapter, PCIe 4.0 x16 network card | | |

Product Description

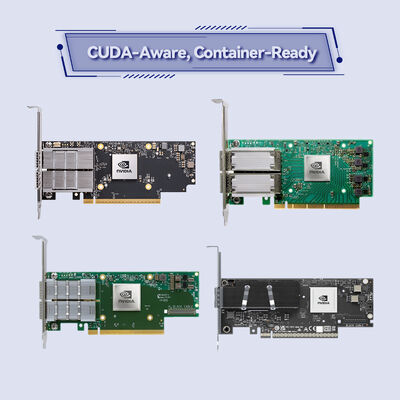

NVIDIA ConnectX-6 MCX653106A-HDAT 200Gb/s Dual-Port InfiniBand Smart Adapter

Industry-leading 200Gb/s InfiniBand and Ethernet smart adapter card with dual QSFP56 ports, delivering in-network computing acceleration, block-level encryption, and a PCIe 4.0 x16 host interface for HPC, AI, and cloud data centers.

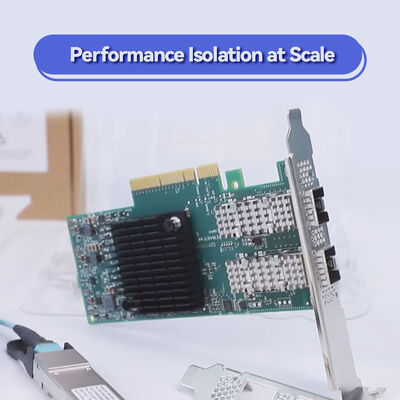

- Dual-port 200Gb/s InfiniBand (HDR) or 200/100/50/40/25/10GbE connectivity

- PCIe Gen 4.0 x16 host interface (backward compatible with Gen 3.0); up to 215 million messages per second

- Hardware offloads: NVMe-oF target/initiator, XTS-AES 256/512-bit encryption, MPI tag matching

- NVIDIA In-Network Computing and GPUDirect RDMA support

- Low-profile PCIe stand-up form factor, RoHS compliant

Characteristics

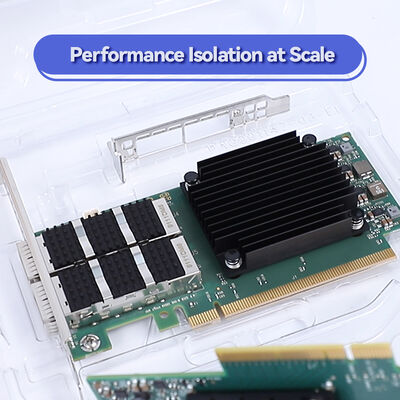

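- 200Gb/s Throughput: Each of the two ports runs at up to 200Gb/s InfiniBand (HDR) or Ethernet in each direction; aggregate throughput across both ports is bounded by the PCIe 4.0 x16 host link (about 252Gb/s per direction before protocol overhead).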

- In-Network Computing: Supports NVIDIA SHARP technology, which offloads collective operations (MPI, NCCL, SHMEM) to the switch fabric.

- Block-Level Encryption: Hardware AES-XTS 256/512-bit encryption/decryption without CPU overhead; FIPS compliant.

- NVMe-oF Offloads: Target and initiator offloads for NVMe over Fabrics, reducing CPU utilization.

- Advanced Virtualization: SR-IOV up to 1K VFs, ASAP² acceleration for OVS and virtual switching.
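On Linux, SR-IOV virtual functions are normally requested through the kernel's standard sysfs files sriov_totalvfs and sriov_numvfs. The sketch below assumes SR-IOV is already enabled in the adapter firmware (the number the kernel exposes depends on that configuration, not only on the 1K device maximum) and uses a placeholder interface name, ib0; the helper enable_vfs is hypothetical, not a vendor tool.

```python
from pathlib import Path


def enable_vfs(ifname: str, num_vfs: int, sysfs_root: str = "/sys/class/net") -> Path:
    """Request num_vfs SR-IOV virtual functions through the Linux sysfs interface.

    Assumes SR-IOV is enabled in the adapter firmware; ifname is a placeholder.
    """
    dev = Path(sysfs_root) / ifname / "device"
    total = int((dev / "sriov_totalvfs").read_text().strip())
    if not 0 <= num_vfs <= total:
        raise ValueError(f"{ifname} supports at most {total} VFs, requested {num_vfs}")
    numvfs = dev / "sriov_numvfs"
    # The kernel rejects changing one nonzero VF count to another (EBUSY),
    # so drop to 0 first when VFs already exist.
    if int(numvfs.read_text().strip()) != 0:
        numvfs.write_text("0")
    numvfs.write_text(str(num_vfs))
    return numvfs
```

Running it against the real /sys tree requires root; pointing sysfs_root at a scratch directory lets the logic be exercised without hardware.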

Technology & Standards

The MCX653106A-HDAT supports NVIDIA In-Network Computing (SHARP), RDMA (IBTA 1.3), RoCE, and NVMe-oF. The ConnectX-6 device supports up to 32 PCIe Gen 4.0 lanes (as 2x16 in Socket Direct configurations); this card uses a single x16 interface. It also supports PAM4 and NRZ SerDes and advanced features such as Dynamically Connected Transport (DCT), On-Demand Paging (ODP), and Adaptive Routing. Overlay offloads for VXLAN, NVGRE, and Geneve are hardware-accelerated. The adapter complies with IEEE 802.3bj, 802.3bm, and 802.3by and with InfiniBand Trade Association specifications.

Working Principle: Smart Offload Architecture

ConnectX-6 offloads communication and storage tasks from the host CPU to the adapter hardware. For MPI collectives, SHARP aggregates data in the switch fabric as it travels between nodes, reducing the traffic that reaches the endpoints. For storage, NVMe-oF commands are processed directly on the adapter, freeing CPU cores. Block encryption and decryption occur inline at wire speed. The result is lower latency, a higher message rate (up to 215 million messages per second), and better application scalability.
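To illustrate why in-network aggregation reduces endpoint traffic, the sketch below compares a host-based ring allreduce with an idealized switch-side reduction. It is a back-of-the-envelope model, not SHARP's actual algorithm: it assumes each node contributes an S-byte buffer and ignores switch tree depth, SHARP resource limits, and protocol headers.

```python
def ring_allreduce(n_nodes: int, msg_bytes: int) -> tuple[int, float]:
    """Host-based ring allreduce: (sequential send steps, bytes each node sends)."""
    steps = 2 * (n_nodes - 1)                        # reduce-scatter + allgather phases
    sent = 2 * (n_nodes - 1) / n_nodes * msg_bytes   # each step moves S/N bytes
    return steps, sent


def in_network_allreduce(n_nodes: int, msg_bytes: int) -> tuple[int, float]:
    """Idealized switch aggregation: each node sends its buffer once and the
    switches return the reduced result, so the endpoint cost is independent
    of n_nodes in this model."""
    return 1, float(msg_bytes)


if __name__ == "__main__":
    size = 1 << 20  # 1 MiB per node (illustrative)
    for n in (8, 64, 512):
        print(n, ring_allreduce(n, size), in_network_allreduce(n, size))
```

The ring's step count grows linearly with node count, which is the latency term that in-network reduction removes; per-node bytes sent approach 2S for the ring versus S for the idealized aggregation.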

Applications & Deployment

- HPC Clusters: MPI-based simulations requiring low latency and high message rate.

- AI Training: NVIDIA GPU clusters with GPUDirect RDMA and NCCL collectives.

- NVMe-oF Storage: Target/initiator offload for high-performance NVMe storage access.

- Virtualized Data Centers: SR-IOV and ASAP² for OVS offload in NFV and cloud.

- Multi-Socket Servers: Socket Direct configurations to bypass QPI/UPI bottlenecks.

Technical Specifications & Ordering Options

| Model | Ports & Speed | Host Interface | Form Factor | Encryption | Protocols | OPN |
|---|---|---|---|---|---|---|
| ConnectX-6 | 2x QSFP56 (200Gb/s IB/Eth) | PCIe 4.0 x16 (Gen 3 compatible) | PCIe stand-up (low-profile) | AES-XTS 256/512-bit | InfiniBand, Ethernet, NVMe-oF | MCX653106A-HDAT |
| ConnectX-6 | 1x QSFP56 (200Gb/s) | PCIe 4.0 x16 | PCIe stand-up | AES-XTS | IB/Eth | MCX653105A-HDAT |
| ConnectX-6 | 2x QSFP56 (200Gb/s) | PCIe 4.0 x16 | OCP 3.0 | AES-XTS | IB/Eth | MCX653436A-HDAT |

Note: MCX653106A-HDAT supports 200Gb/s InfiniBand (HDR) and 200/100/50/25/10GbE. Dimensions: 167.65mm x 68.90mm (without bracket). Shipped with a tall bracket installed; a short bracket is included as an accessory. Power consumption < 15W typical.

Advantages & Differentiators

- vs. Previous Gen (ConnectX-5): Double the bandwidth (200Gb/s vs. 100Gb/s), integrated SHARP for in-network computing, and block-level encryption.

- vs. Competitor NICs: True hardware offload for NVMe-oF and MPI collectives, not just stateless offloads.

- Socket Direct Option: Available for multi-socket servers to eliminate UPI/QPI bottlenecks.

- FIPS Compliance: Hardware encryption meets government security standards.

Service & Support

We offer 24/7 technical consultation, RMA services, and integration support for ConnectX-6 adapters. Each card is backed by a 1-year warranty (extendable). Our team provides driver validation for major Linux distributions, Windows, and VMware. Pre-sales configuration assistance for InfiniBand fabric design is available.

Frequently Asked Questions (FAQ)

Q: Is the MCX653106A-HDAT compatible with 200Gb/s Quantum switches?

A: Yes. It interoperates with NVIDIA Quantum HDR switches (QM8700/QM8790) at 200Gb/s, and with Quantum-2 switches when the switch port runs at HDR speed with appropriate cables. The adapter itself does not support NDR rates.

Q: Can this adapter be used for Ethernet as well as InfiniBand?

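A: Yes, it supports both InfiniBand and Ethernet. Each port's protocol is set in the adapter firmware configuration (for example, with the mlxconfig tool's LINK_TYPE_P1 and LINK_TYPE_P2 parameters), so confirm the port type matches the switch before deployment; changes take effect after a reboot or firmware reset.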
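As a sketch of how the per-port protocol might be scripted, the helper below only builds an mlxconfig command line; it does not run it. It assumes the LINK_TYPE convention used on VPI adapters (1 = InfiniBand, 2 = Ethernet) and a placeholder PCI address; link_type_command is a hypothetical helper, and the exact options accepted should be checked against the installed MFT version.

```python
import shlex

# LINK_TYPE values used by mlxconfig on VPI adapters: 1 = InfiniBand, 2 = Ethernet.
LINK_TYPES = {"ib": 1, "eth": 2}


def link_type_command(device: str, port1: str, port2: str) -> list[str]:
    """Build (but do not run) an mlxconfig command that sets both ports' protocol.

    device is a placeholder: an MST device path or the adapter's PCI address.
    """
    for name, mode in (("port1", port1), ("port2", port2)):
        if mode not in LINK_TYPES:
            raise ValueError(f"{name}: expected 'ib' or 'eth', got {mode!r}")
    return [
        "mlxconfig", "-d", device, "set",
        f"LINK_TYPE_P1={LINK_TYPES[port1]}",
        f"LINK_TYPE_P2={LINK_TYPES[port2]}",
    ]


if __name__ == "__main__":
    # Hypothetical PCI address; look up the real one with lspci.
    print(shlex.join(link_type_command("0000:3b:00.0", "ib", "eth")))
```

Keeping command construction separate from execution makes the change easy to review before it is applied with root privileges.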

Q: Does it support RoCE (RDMA over Converged Ethernet)?

A: Yes, ConnectX-6 fully supports RoCE, providing low-latency RDMA in Ethernet environments.

Q: What is the maximum message rate?

A: The adapter delivers up to 215 million messages per second, ideal for small-packet HPC workloads.
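The 215 million messages per second figure translates into a per-message time budget, and comparing it with a 200Gb/s line rate shows roughly where small messages become message-rate bound rather than bandwidth bound. This is idealized arithmetic that treats all bytes as on-the-wire bytes and ignores packet headers.

```python
MSG_RATE = 215e6    # messages per second, from the specification above
LINE_RATE = 200e9   # bits per second, one HDR port


def ns_per_message(rate: float = MSG_RATE) -> float:
    """Average time budget per message at the quoted message rate."""
    return 1e9 / rate


def crossover_bytes(rate: float = MSG_RATE, line_bps: float = LINE_RATE) -> float:
    """Wire size per message at which the message-rate and line-rate limits meet.

    Messages smaller than this hit the message-rate limit before the link is full.
    """
    return line_bps / 8 / rate


print(f"{ns_per_message():.2f} ns per message")
print(f"crossover at about {crossover_bytes():.0f} bytes per message")
```

This prints about 4.65 ns per message and a crossover near 116 bytes, which is why message rate matters most for small-packet HPC traffic.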

Q: Is the card compatible with PCIe Gen 3.0 slots?

A: Yes, it is backward compatible with PCIe Gen 3.0 x16 slots, but host bandwidth is then limited to about 126Gb/s per direction (before protocol overhead), shared across both ports, so neither port can reach its full 200Gb/s.
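The Gen 3 versus Gen 4 limits follow from per-lane transfer rates (8 GT/s and 16 GT/s) and the 128b/130b line code both generations use. The sketch computes raw per-direction bandwidth for an x16 link and checks whether one or two 200Gb/s ports fit; it ignores TLP and DLLP overhead, so real throughput is somewhat lower.

```python
GT_PER_LANE = {3: 8.0, 4: 16.0}   # transfer rate per lane in GT/s
ENCODING = 128 / 130              # 128b/130b line code (Gen 3 and Gen 4)


def pcie_gbps(gen: int, lanes: int = 16) -> float:
    """Raw data bandwidth per direction in Gb/s, before packet overhead."""
    return GT_PER_LANE[gen] * lanes * ENCODING


def ports_fit(gen: int, port_gbps: float = 200.0, ports: int = 1) -> bool:
    """True if the PCIe link can carry the given ports at full rate in one direction."""
    return pcie_gbps(gen) >= port_gbps * ports


for gen in (3, 4):
    print(f"Gen {gen} x16: {pcie_gbps(gen):.1f} Gb/s per direction; "
          f"one 200G port fits: {ports_fit(gen)}; two fit: {ports_fit(gen, ports=2)}")
```

Gen 3 x16 comes to about 126Gb/s and Gen 4 x16 to about 252Gb/s, so a Gen 4 slot carries one port at full rate but not both simultaneously.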

Precautions & Compatibility Notes

- PCIe Slot Requirement: For full 200Gb/s performance, install in a PCIe Gen 4.0 x16 slot. Gen 3.0 slots will limit throughput.

- Cooling: Ensure adequate airflow in the server chassis; the passively cooled card requires a minimum airflow of 200 LFM (linear feet per minute).

- Cabling: Use QSFP56 passive/active copper or optical modules rated for 200Gb/s (HDR).

- Driver Support: Use the latest NVIDIA MLNX_OFED for Linux or WinOF-2 for Windows.

- Operating Temperature: 0°C to 70°C; store between -40°C and 85°C.
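After installation, the negotiated PCIe link can be read from the standard Linux sysfs files current_link_speed and current_link_width to confirm the slot requirement above. The sketch assumes those files exist for the adapter's PCI address (a placeholder here) and accepts both the "16.0 GT/s PCIe" and older "16 GT/s" formats; check_pcie_link is a hypothetical helper.

```python
import re
from pathlib import Path


def parse_gts(text: str) -> float:
    """Extract the GT/s value from a sysfs current_link_speed string."""
    m = re.search(r"([\d.]+)\s*GT/s", text)
    if not m:
        raise ValueError(f"unrecognized link speed: {text!r}")
    return float(m.group(1))


def check_pcie_link(pci_addr: str, sysfs: str = "/sys/bus/pci/devices") -> list[str]:
    """Return warnings if the adapter did not train at Gen 4 (16 GT/s) x16."""
    dev = Path(sysfs) / pci_addr
    speed = parse_gts((dev / "current_link_speed").read_text())
    width = int((dev / "current_link_width").read_text())
    warnings = []
    if speed < 16.0:
        warnings.append(f"link at {speed} GT/s; Gen 4 (16 GT/s) needed for full throughput")
    if width < 16:
        warnings.append(f"link width x{width}; x16 needed for full throughput")
    return warnings
```

An empty list means the card negotiated Gen 4 x16; a warning usually points to a Gen 3 slot, a narrower electrical slot, or a riser limitation.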

Company Introduction

With over a decade of experience, we operate a large-scale factory backed by a strong technical team. Our extensive customer base and domain expertise enable us to offer competitive pricing without compromising on quality. As authorized distributors for Mellanox, Ruckus, Aruba, and Extreme, we stock original network switches, network cards (NICs), wireless access points, controllers, and cabling. We maintain a 10 million USD inventory to ensure rapid fulfillment across diverse product lines. Every shipment is verified for accuracy, and we provide 24/7 consultation and technical support. Our professional sales and technical teams have earned a high reputation in global markets. Partner with us for reliable infrastructure solutions.