NVIDIA ConnectX-8 SuperNIC C8180 (900-9X81E-00EX-ST0) 800G AI Networking Adapter

Product Details:

| Brand Name: | Mellanox |
|---|---|
| Model Number: | C8180 (900-9X81E-00EX-ST0) |
| Document: | connectx-datasheet-connectx...1).pdf |

Payment & Shipping Terms:

| Minimum Order Quantity: | 1 pc |
|---|---|
| Price: | Negotiable |
| Packaging Details: | Outer box |
| Delivery Time: | Based on inventory |
| Payment Terms: | T/T |
| Supply Ability: | Supply by project/batch |

Detail Information

| Model NO.: | C8180 (900-9X81E-00EX-ST0) | Transmission Rate: | 400GbE |
|---|---|---|---|
| Ports: | Dual-Port | Function: | LACP, Stackable, VLAN Support |
| Technology: | InfiniBand | Host Interface: | PCIe Gen6 x16 |
| Interface Type: | QSFP112 | Trademark: | Mellanox |

Product Description

Optimized for hyperscale AI workloads, the ConnectX-8 SuperNIC delivers up to 800Gb/s bi-directional bandwidth, PCIe Gen6 host interface, and advanced telemetry-based congestion control. Built for both InfiniBand and Ethernet fabrics, it powers trillion-parameter GPU computing and agentic AI applications with unprecedented efficiency.

The NVIDIA ConnectX-8 SuperNIC (C8180) represents a generational leap in AI fabric acceleration. Supporting up to 800 gigabits per second (Gb/s) over InfiniBand or Ethernet, this adapter eliminates network bottlenecks in large-scale GPU clusters. With native PCIe Gen6 (up to 48 lanes) and advanced features such as NVIDIA GPUDirect RDMA, SHARP in-network computing, and programmable congestion control, the ConnectX-8 ensures maximum throughput and lowest latency for training, inference, and data-intensive HPC workloads. Its power-efficient design aligns with sustainable AI data center goals while enabling scaling beyond hundreds of thousands of GPUs.

- 800Gb/s total bandwidth – supports 800/400/200/100 Gb/s InfiniBand speeds and 400/200/100/50/25 Gb/s Ethernet.

- PCIe Gen6 host interface – up to 48 lanes, low overhead, and Multi-Host support for up to four hosts.

- In-Network Computing – SHARPv3 for collective operations, MPI accelerations, rendezvous protocol offloads.

- GPUDirect RDMA & Storage – direct GPU memory access and GPUDirect Storage for zero-copy I/O.

- Advanced congestion control & telemetry – real-time flow optimization for AI tail latency.

- Hardware security – secure boot, flash encryption, device attestation (SPDM 1.1), inline crypto (IPsec/MACsec/PSP).

- RDMA & RoCEv2 acceleration with programmable congestion control.

- Ethernet Accelerated Switching and Packet Processing (ASAP²) for SDN/OVS offload.

- Overlay network acceleration: VXLAN, GENEVE, NVGRE.

- Stateless TCP offloads (LSO, LRO, GRO, TSS, RSS).

- Precision Time Protocol (PTP) IEEE 1588v2 Class C, SyncE, PTM, time-triggered scheduling.

- Burst buffer offloads, high-speed packet reordering.

The ConnectX-8 SuperNIC C8180 is purpose-built for next-generation AI fabrics and hyperscale cloud environments:

- AI Factories & Large Language Model Clusters – trillion-parameter model training with 800G front-end and back-end networks.

- High-Performance Computing (HPC) – SHARPv3 in-network reduction accelerates MPI collectives for scientific simulations.

- GPU-Accelerated Cloud Data Centers – multi-tenant isolation, overlay offloads, and advanced QoS.

- Enterprise AI Infrastructure – from inference farms to AI data platforms requiring deterministic low latency.

- Storage & Converged Fabrics – GPUDirect Storage and RoCEv2 for NVMe-oF and distributed file systems.

Seamless integration with NVIDIA networking platforms and major server OEMs. Validated software stacks include:

- NVIDIA NCCL, HPC-X, DOCA UCC/UCCX

- Open MPI, MVAPICH2

- Linux distributions (RHEL, Ubuntu, SLES)

- Windows Server with RDMA support

- DPDK & VPP for telco/NFV

- NVIDIA DGX / HGX systems

- PCIe Gen6 enabled servers (x86 / Arm / GPU-accelerated nodes)

- Industry standard OCP 3.0 TSFF and Mezzanine designs

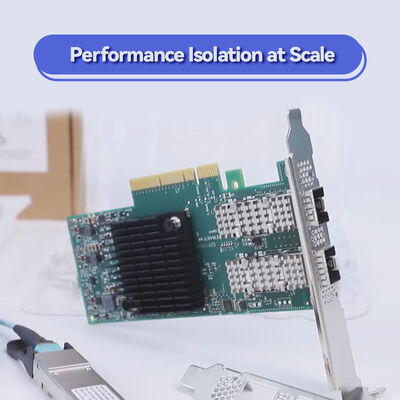

- Compatible with 800G OSFP and 400G QSFP112 optics

| Parameter | Details |

|---|---|

| Product Model | C8180 (900-9X81E-00EX-ST0) |

| Maximum Bandwidth | 800 Gb/s |

| InfiniBand Speeds | 800 / 400 / 200 / 100 Gb/s |

| Ethernet Speeds | 400 / 200 / 100 / 50 / 25 Gb/s |

| Host Interface | PCIe Gen6 (up to 48 lanes), Multi-Host capable (up to 4 hosts) |

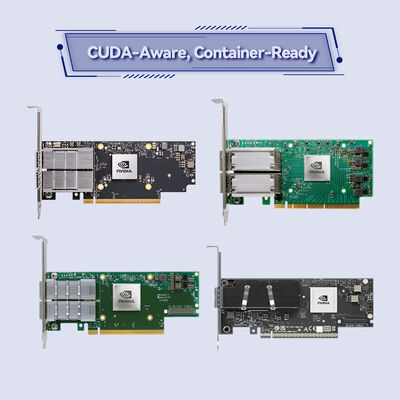

| Form Factors | PCIe HHHL 1P x OSFP, PCIe HHHL 2P x QSFP112, Dual ConnectX-8 Mezzanine, OCP 3.0 TSFF 1P x OSFP |

| Port Configuration | 1x 800G OSFP or 2x 400G / split up to 8 logical ports |

| RDMA Support | RoCEv2, IBTA v1.7 compliant |

| MTU | 256 to 4096 bytes, 1GB messages |

| Security Features | Secure boot (hardware root of trust), flash encryption, SPDM 1.1, Inline IPsec/MACsec/PSP |

| Timing & Sync | PTP IEEE 1588v2 Class C, SyncE G.8262.1, PTM, PPS in/out |

| Management | NC-SI, MCTP over SMBus/PCIe PLDM (DSP0248/0267/0218), SPI flash, JTAG |

| Network Boot | InfiniBand / Ethernet PXE, iSCSI, UEFI |

| SKU / Option | Port / Speed | Form Factor | Typical Use Case |
|---|---|---|---|
| C8180 – 900-9X81E-00EX-ST0 | 1x OSFP 800G (or split to 2x400G / 8x100G) | PCIe HHHL | AI training nodes, high-density GPU servers |
| Dual ConnectX-8 Mezzanine | 2x 400G QSFP112 | Proprietary mezzanine | NVIDIA HGX / OEM integrated systems |
| OCP 3.0 TSFF 1P | 1x OSFP 800G | OCP 3.0 TSFF | Cloud-optimized OCP platforms |
| PCIe HHHL 2P | 2x 400G QSFP112 | PCIe HHHL | Dual-port high availability or multi-fabric |
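The split options in the table (2x400G, 8x100G) follow from dividing the 800G port evenly across logical ports. A minimal sketch of that arithmetic, assuming power-of-two splits only; the function name `breakout_options` is illustrative, and the 4x200G entry is an interpolation that is not listed in the table, so confirm the supported modes for your SKU and cable before ordering:

```python
from __future__ import annotations


def breakout_options(port_gbps: int = 800, max_ports: int = 8) -> dict[int, int]:
    """Map logical port count -> per-port speed (Gb/s) for power-of-two splits.

    Assumption: the physical port is divided evenly. Which splits a given
    card, firmware, and breakout cable actually support must be checked
    against vendor documentation.
    """
    options = {}
    ports = 1
    while ports <= max_ports:
        options[ports] = port_gbps // ports
        ports *= 2
    return options


if __name__ == "__main__":
    for ports, speed in breakout_options().items():
        print(f"{ports} x {speed}G")
```

Each logical port needs a matching switch port speed, so an 8-way split only makes sense when the far end accepts 100G links.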

800Gb/s line rate and advanced congestion management reduce performance variability in multi-tenant AI clouds. SHARP in-network computing further shortens collective operations by performing reductions inside the switch fabric rather than on the hosts.

PCIe Gen6 and support for both InfiniBand and Ethernet ensure investment protection for next-gen GPU architectures. Power-efficient design lowers TCO at scale.

Hardware root of trust, secure firmware updates, and telemetry-based flow control give operators confidence in production AI factories.

Natively integrated with NCCL, DOCA, and GPUDirect technologies, which reduces time-to-solution for AI researchers and data scientists.

As an authorized channel partner, Starsurge provides global logistics, technical pre-sales consulting, and post-sales support for NVIDIA ConnectX-8 SuperNIC. Our services include:

- Integration testing with your server/storage environment.

- Firmware management and compatibility validation.

- RMA and advance replacement options.

- Custom cabling and transceiver bundling (OSFP, QSFP112, breakout cables).

- Multilingual technical support (English, Chinese, and more).

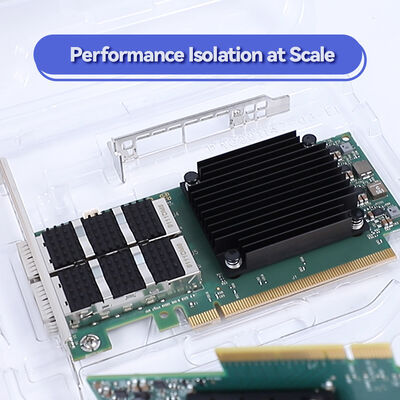

- Ensure adequate airflow and cooling in high-density server chassis when using 800G optics (power dissipation ~25-30W typical).

- Always use certified OSFP or QSFP112 optical/copper modules from NVIDIA or validated partners to guarantee signal integrity.

- Firmware updates must follow NVIDIA release notes; unsupported firmware versions may cause performance degradation.

- Multi-host configuration requires specific motherboard PCIe slot bifurcation support – please verify with your server vendor.

- Some advanced features (e.g., SHARPv3, PTP Class C) require corresponding switch infrastructure (NVIDIA Quantum-3 or Spectrum-5 families).
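On Linux hosts, the firmware and link checks in these notes can be scripted. A minimal sketch, assuming the adapter is exposed through the kernel RDMA sysfs tree at `/sys/class/infiniband` (the usual location for mlx5-based devices); the function names are illustrative and are not part of any NVIDIA tool:

```python
from __future__ import annotations

from pathlib import Path


def read_attr(path: Path) -> str:
    """Return a sysfs attribute as stripped text, or 'unknown' if unreadable."""
    try:
        return path.read_text().strip()
    except OSError:
        return "unknown"


def list_rdma_devices(sysfs_root: str = "/sys/class/infiniband") -> list[dict]:
    """Collect firmware version and per-port link info for each RDMA device.

    Assumption: the standard layout <root>/<device>/fw_ver and
    <root>/<device>/ports/<n>/{state,rate,link_layer}.
    """
    root = Path(sysfs_root)
    if not root.is_dir():
        return []  # no RDMA devices, or driver not loaded
    devices = []
    for dev in sorted(root.iterdir()):
        ports = []
        ports_dir = dev / "ports"
        if ports_dir.is_dir():
            for port in sorted(ports_dir.iterdir()):
                ports.append({
                    "port": port.name,
                    "state": read_attr(port / "state"),
                    "rate": read_attr(port / "rate"),
                    "link_layer": read_attr(port / "link_layer"),
                })
        devices.append({
            "name": dev.name,
            "fw_ver": read_attr(dev / "fw_ver"),
            "ports": ports,
        })
    return devices


if __name__ == "__main__":
    for device in list_rdma_devices():
        print(device["name"], "firmware", device["fw_ver"])
        for p in device["ports"]:
            print(f"  port {p['port']}: {p['state']}, {p['rate']}, {p['link_layer']}")
```

Compare the reported firmware version against the NVIDIA release notes for your driver stack before and after any update.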

Hong Kong Starsurge Group Co., Limited is a technology-driven provider of network hardware, IT services, and system integration solutions. Founded in 2008, the company serves customers worldwide with products including network switches, NICs, wireless access points, controllers, cables, and related networking equipment. Backed by an experienced sales and technical team, Starsurge supports industries such as government, healthcare, manufacturing, education, finance, and enterprise. The company also offers IoT solutions, network management systems, custom software development, multilingual support, and global delivery. With a customer-first approach, Starsurge focuses on reliable quality, responsive service, and tailored solutions that help clients build efficient, scalable, and dependable network infrastructure.

For NVIDIA ConnectX-8 SuperNIC C8180 pricing, samples, or integration advice, please contact our networking specialists.

| Component | Recommended / Validated Models |

|---|---|

| Switch Platforms | NVIDIA Quantum-3 InfiniBand, Spectrum-5 Ethernet (800G capable) |

| Optical Transceivers | NVIDIA OSFP 800G DR8 / 2xFR4, QSFP112 400G SR4/DR4 |

| GPU Servers | NVIDIA DGX H100/H200, Supermicro GPU X13, PowerEdge XE9680, HPE Cray XD |

| Operating Systems | Ubuntu 22.04/24.04, RHEL 9.x, Rocky Linux 9, Windows Server 2025 (RDMA) |

- ☐ Confirm PCIe slot type (Gen6 x16 or x32). For full 800G host bandwidth, at least PCIe 6.0 x16 is recommended.

- ☐ Verify thermal clearance and airflow direction in your chassis (passive heatsink or active fan required?)

- ☐ Choose correct form factor: HHHL / OCP 3.0 / Mezzanine for your server.

- ☐ Select compatible optics/cables: OSFP 800G or QSFP112 2x400G depending on port variant.

- ☐ Ensure the target switch supports 800G speed and the required protocols (InfiniBand XDR or Ethernet 800G).

- ☐ Check driver & firmware support: MLNX_OFED or NVIDIA DOCA version for your OS.
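The PCIe slot item in this checklist comes down to simple arithmetic: the slot's raw rate must be at least the 800 Gb/s port rate. A rough sketch using the per-lane signaling rates from the PCIe base specifications; it deliberately ignores encoding and protocol overhead, so usable throughput is somewhat lower than the figures it prints, and the function names are illustrative:

```python
# Raw per-lane signaling rate in GT/s for each PCIe generation.
PCIE_GT_PER_LANE = {3: 8, 4: 16, 5: 32, 6: 64}


def raw_link_gbps(gen: int, lanes: int) -> int:
    """Raw one-direction link rate in Gb/s, before encoding/protocol overhead."""
    return PCIE_GT_PER_LANE[gen] * lanes


def slot_can_feed(gen: int, lanes: int, port_gbps: int = 800) -> bool:
    """True if the raw slot rate is at least the network port rate."""
    return raw_link_gbps(gen, lanes) >= port_gbps


if __name__ == "__main__":
    for gen, lanes in [(5, 16), (5, 32), (6, 16)]:
        rate = raw_link_gbps(gen, lanes)
        verdict = "ok" if slot_can_feed(gen, lanes) else "too slow"
        print(f"PCIe Gen{gen} x{lanes}: {rate} Gb/s raw -> {verdict} for 800G")
```

By this estimate a Gen5 x16 slot tops out at 512 Gb/s raw, which is why a Gen6 x16 link (or an equivalent 32 lanes of Gen5) is needed to keep an 800G port busy.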


- NVIDIA Networking Performance Tuning Guide for AI Fabrics

- ConnectX-8 Adapter Cards Installation Manual (available upon request)

- RoCEv2 Congestion Control Best Practices – White Paper

- Understanding SHARPv3 In-Network Reduction for LLMs