NVIDIA ConnectX-7 MCX75310AAS-NEAT NDR 400Gb/s InfiniBand Adapter

Product Details:

| Brand Name: | Mellanox |
|---|---|
| Model Number: | MCX75310AAS-NEAT (900-9X766-003N-SQ0) |
| Document: | Connectx-7 infiniband.pdf |

Payment & Shipping Terms:

| Minimum Order Quantity: | 1 pc |
|---|---|
| Price: | Negotiable |
| Packaging Details: | Outer box |
| Delivery Time: | Based on inventory |
| Payment Terms: | T/T |
| Supply Ability: | Supply by project/batch |

Detail Information

| Model No.: | MCX75310AAS-NEAT (900-9X766-003N-SQ0) | Ports: | Single-port |
|---|---|---|---|
| Technology: | InfiniBand | Interface Type: | OSFP |
| Specification: | 16.7 cm x 6.9 cm | Origin: | India / Israel / China |
| Transmission Rate: | 400Gb/s | Host Interface: | PCIe Gen5 x16 |
| Highlight: | NVIDIA ConnectX-7 InfiniBand adapter, Mellanox 400Gb/s network card, NDR InfiniBand adapter with warranty | | |

Product Description

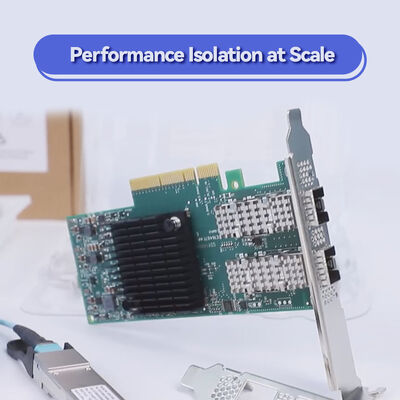

The NVIDIA ConnectX-7 MCX75310AAS-NEAT is a flagship NDR 400Gb/s InfiniBand smart adapter with a PCIe Gen5 x16 host interface and RDMA support over both InfiniBand and RoCE. Engineered for AI factories, HPC clusters, and hyperscale cloud data centers, this ultra-low-latency adapter integrates in-network computing, hardware security offloads, and advanced virtualization to accelerate scientific computing and software-defined infrastructure.

The NVIDIA ConnectX-7 family delivers groundbreaking performance with up to 400Gb/s bandwidth per port, supporting both InfiniBand (NDR/HDR/EDR) and Ethernet (up to 400GbE). The family offers a PCIe Gen5 host interface of up to x32 lanes (x16 on the MCX75310AAS-NEAT), multi-host capability, and advanced engines for GPUDirect RDMA, NVMe-oF acceleration, and inline cryptography. Built for demanding AI training, simulation, and real-time analytics, the adapter reduces CPU overhead while maximizing data throughput and security.

With on-board memory for rendezvous offload, SHARP collective acceleration, and ASAP2 SDN offloads, ConnectX-7 transforms standard servers into high-performance network nodes with near-zero jitter and nanosecond-precision timing (IEEE 1588v2 Class C).

- NDR InfiniBand & 400GbE ready - Up to 400Gb/s total bandwidth, supports NDR, HDR, EDR InfiniBand and 400/200/100/50/25/10GbE

- PCIe Gen5 x16 (up to x32 lanes) - High-throughput host interface with TLP processing hints, ATS, PASID, and SR-IOV

- In-Network Computing - Hardware offload of collective operations (SHARP), rendezvous protocol, burst buffer offload

- GPUDirect RDMA & GPUDirect Storage - Direct GPU-to-NIC data path, accelerating deep learning and data analytics

- Hardware Security Engines - Inline IPsec/TLS/MACsec encryption/decryption (AES-GCM 128/256-bit) + secure boot with hardware root-of-trust

- Advanced Storage Acceleration - NVMe over Fabrics (NVMe-oF) and NVMe/TCP offload, T10-DIF signature handover, iSER, NFS over RDMA

- ASAP2 SDN & VirtIO Acceleration - OVS offload, VXLAN/GENEVE/NVGRE encapsulation, connection tracking, and programmable parser

- Precision Timing - PTP (IEEE 1588v2) with 12ns accuracy, SyncE, time-triggered scheduling, packet pacing

Built on a 7 nm process, ConnectX-7 integrates multiple hardware acceleration engines that offload the CPU and deliver deterministic performance. Key technological pillars include:

- RDMA over Converged Ethernet (RoCE) - Zero-Touch RoCE for low-latency Ethernet fabrics

- Dynamically Connected Transport (DCT) & XRC - Efficient MPI and HPC communication

- On-Demand Paging (ODP) & User Memory Registration (UMR) - Simplifies memory management for large-scale applications

- Scalable Hierarchical Aggregation and Reduction Protocol (SHARP) - In-network data reduction for MPI collectives

- Multi-Host Technology - Enables up to 4 independent hosts to share a single adapter, optimizing server utilization

- PLDM & SPDM Manageability - Firmware update, monitoring, and device attestation for enterprise security

Typical deployment scenarios:

- AI & Machine Learning Clusters - Large-scale training with NCCL, UCX, and GPUDirect RDMA

- HPC Simulation & Research - Weather modeling, genomics, molecular dynamics requiring low-latency MPI

- Hyperscale Cloud & SDDC - Overlay networking, NFV acceleration, secure multi-tenancy (SR-IOV)

- Enterprise Storage Systems - NVMe-oF target offload, distributed file systems such as Lustre, and GPUDirect Storage

- 5G Edge & Telecom - Time-sensitive infrastructures with Class C PTP and MACsec security

Operating Systems & Virtualization: In-box drivers for Linux (RHEL, Ubuntu, Rocky Linux), Windows Server, VMware ESXi (SR-IOV), and Kubernetes (CNI plugins). Optimized for NVIDIA HPC-X, UCX, OpenMPI, MVAPICH, MPICH, OpenSHMEM, NCCL, and UCC.

Hardware Compatibility: Standard PCIe Gen5 slots (x16 mechanical, x16/x32 electrical). Certified with major server platforms from HPE, Supermicro, and NVIDIA DGX systems.

Interoperability: Fully compliant with InfiniBand Trade Association Spec 1.5, IEEE 802.3 for Ethernet, and PCI-SIG Gen5 specifications.

| Parameter | Detail |
|---|---|
| Product Model | MCX75310AAS-NEAT |
| Form Factor | PCIe HHHL (half-height, half-length); tall (full-height) bracket included |
| Host Interface | PCIe Gen5 x16 (the ConnectX-7 family supports up to 32 lanes, bifurcation, and Multi-Host) |
| Network Protocols | InfiniBand (NDR/HDR/EDR) & Ethernet (400GbE, 200GbE, 100GbE, 50GbE, 25GbE, 10GbE) |
| Port Configuration | Single-port OSFP (supports 1x 400G NDR or 2x 200G split; please confirm port density per SKU) |
| InfiniBand Speeds | NDR 400Gb/s, HDR 200Gb/s, EDR 100Gb/s, FDR (compatible) |
| Ethernet Speeds | 400/200/100/50/25/10GbE NRZ/PAM4 |
| On-board Memory | Integrated in-network memory for rendezvous offload & burst buffer |
| Security Offloads | Inline IPsec, TLS, MACsec (AES-GCM 128/256-bit), Secure Boot, Flash Encryption |
| Storage Offloads | NVMe-oF, NVMe/TCP, T10-DIF, SRP, iSER, NFS over RDMA, SMB Direct |
| Timing & Sync | IEEE 1588v2 PTP (12 ns accuracy), SyncE, programmable PPS, time-triggered scheduling |
| Virtualization | SR-IOV, VirtIO acceleration, VXLAN/NVGRE/GENEVE offload, Connection tracking (L4 firewall) |
| Manageability | NC-SI, MCTP over SMBus/PCIe, PLDM (Monitor/Firmware/FRU/Redfish), SPDM, SPI flash, JTAG |
| Remote Boot | InfiniBand boot, iSCSI, UEFI, PXE |
| Power Consumption | Not publicly specified; high-performance adapters require adequate airflow, so please confirm before ordering |
| Operating Temperature | 0°C to 55°C (with appropriate chassis cooling) |

| Model (Partial) | Port/Speed | Host Interface | Form Factor | Use Case |
|---|---|---|---|---|
| MCX75310AAS-NEAT | 1x NDR 400Gb/s InfiniBand/Ethernet | PCIe Gen5 x16 | HHHL | AI, HPC, Cloud - flagship with Multi-Host & secure boot |
| MCX75310AAS-NCAT | 1x NDR 400Gb/s | PCIe Gen5 x16 | HHHL | Similar feature set with crypto enabled |
| MCX75510AAS-NEAT | 2x NDR 200Gb/s or 1x 400Gb/s | PCIe Gen5 x16 | FHHL | Dual-port for redundancy |
| OCP 3.0 variant | Up to 2x 200Gb/s | PCIe Gen5 x16 | OCP 3.0 SFF | Hyperscale OCP platforms |

For exact ordering codes and custom configurations, please refer to the official ConnectX-7 PCIe Adapter Manual (docs.nvidia.com) or contact Hong Kong Starsurge Group.

Lowest Total Cost of Ownership - Offloads networking, storage, and security tasks from the CPU, lowering power and cooling costs per Gb/s.

Future-Ready Bandwidth - PCIe Gen5 and NDR 400G support eliminates bottlenecks for next-gen GPU servers and AI clusters.

Enterprise Security - Hardware inline encryption (IPsec/TLS/MACsec) and a secure chain of trust help meet compliance requirements such as FIPS and DoD.

Seamless Integration - Full compatibility with major Linux distributions, hypervisors, and container orchestration platforms.
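The bandwidth claim above can be checked with simple arithmetic. The Python sketch below compares the theoretical per-direction bandwidth of a PCIe x16 link with one 400Gb/s NDR port. It assumes the standard per-lane signaling rates (8, 16, and 32 GT/s for Gen3, Gen4, and Gen5) and 128b/130b line encoding, and it ignores packet and flow-control overhead, so real usable throughput is somewhat lower. The names `pcie_link_gbps` and `NDR_PORT_GBPS` are illustrative only.

```python
# Rough PCIe vs. NDR bandwidth check (theoretical, one direction).
# Assumptions: 128b/130b encoding for Gen3-Gen5; TLP headers, DLLPs,
# and flow-control overhead are ignored, so real throughput is lower.

GT_PER_LANE = {"Gen3": 8.0, "Gen4": 16.0, "Gen5": 32.0}  # GT/s per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line code
NDR_PORT_GBPS = 400.0  # one NDR InfiniBand port, in Gb/s


def pcie_link_gbps(gen: str, lanes: int = 16) -> float:
    """Return the post-encoding bandwidth of a PCIe link in Gb/s (one direction)."""
    return GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


if __name__ == "__main__":
    for gen in ("Gen3", "Gen4", "Gen5"):
        bw = pcie_link_gbps(gen)
        verdict = "enough" if bw >= NDR_PORT_GBPS else "bottleneck"
        print(f"PCIe {gen} x16: {bw:6.1f} Gb/s -> {verdict} for 400Gb/s NDR")
```

Under these assumptions a Gen4 x16 link tops out near 252 Gb/s per direction, while Gen5 x16 offers roughly 504 Gb/s, which is why a Gen4 slot limits a 400Gb/s port.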

Hong Kong Starsurge Group provides end-to-end lifecycle support: pre-sales architectural guidance, compatibility validation, warranty management, and RMA services. Our technical team assists with driver tuning, firmware upgrades, and cluster integration. Standard warranty: 3-year limited hardware support with optional extended coverage. Global shipping and multilingual technical assistance are available.

Ordering and installation notes:

- Ensure the server motherboard provides a PCIe Gen5 slot with adequate cooling (active airflow recommended for 400G operation)

- For Multi-Host configurations, verify platform support and cabling requirements (splitter cables may be needed)

- Optical modules and DAC cables are sold separately; use NVIDIA-qualified transceivers for compliance

- Maximum power consumption is not published by NVIDIA; typical thermal designs assume a 25-30 W range under heavy load, so please validate against your chassis thermal guide

- Cryptographic features (IPsec/TLS offload) may require a crypto-enabled SKU or additional licensing; please confirm with sales

Founded in 2008, Starsurge is a technology-driven provider of network hardware, IT services, and system integration. We serve global clients with enterprise-grade NICs, switches, wireless solutions, and customized software development. Backed by experienced sales and technical teams, Starsurge supports government, healthcare, finance, manufacturing, education, and enterprise sectors. Our customer-first approach ensures reliable quality, responsive logistics, and tailored infrastructure solutions across the world.

As an authorized partner of leading networking brands, Starsurge offers professional pre-sales consulting, global delivery, and multilingual support to help you build scalable and efficient networks.

Key specifications at a glance:

- Max Throughput: 400Gb/s per port

- PCIe Gen5: 32 GT/s per lane

- RDMA Support: InfiniBand & RoCE

- Security: Inline IPsec/TLS/MACsec

- NVIDIA SHARP: In-network aggregation

- GPUDirect: RDMA + Storage

| Component / Ecosystem | Supported | Notes |
|---|---|---|
| NVIDIA DGX H100 / GH200 | Yes | Certified |
| VMware vSphere / ESXi | Yes (SR-IOV) | Driver support included |
| Linux kernel 5.x+ | Yes (In-box) | MLNX_OFED recommended |
| Windows Server 2022 | Yes | Native RDMA / RoCE |
| Kubernetes / CNI | Yes | Multus, SR-IOV CNI |
| OpenMPI / MVAPICH | Yes | Optimized for InfiniBand verbs |

Pre-purchase checklist:

- Check host platform: a PCIe Gen5 slot is recommended; a Gen4 slot works through backward compatibility but limits bandwidth

- Confirm cable type: 400G NDR with an OSFP connector on the adapter end, or a splitter cable for 2x200G

- Verify cooling: this high-power adapter requires at least 300 LFM of airflow

- For security features: confirm whether secure boot and crypto offload are needed (the NEAT model includes a hardware root-of-trust)

- Software stack: check MLNX_OFED or inbox driver compatibility with your kernel/OS version
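After installation, part of this checklist can be verified on a Linux host. The Python sketch below reads standard kernel sysfs attributes (`current_link_speed` and `current_link_width` on the PCI device, `state` and `rate` on each RDMA port) and warns if the negotiated PCIe link is below Gen5 x16. It assumes the mlx5 driver (in-box or MLNX_OFED) is loaded so the adapter appears under `/sys/class/infiniband`; the function names are hypothetical and this is not an NVIDIA-supplied tool. Utilities such as `ibstat` (from infiniband-diags) report similar port information.

```python
# Post-install link check for Linux hosts (a sketch, not an NVIDIA tool).
# Assumption: the mlx5 driver is loaded, so each adapter appears under
# /sys/class/infiniband (for example as "mlx5_0").
import re
from pathlib import Path

IB_ROOT = Path("/sys/class/infiniband")
MIN_GT_PER_S = 32.0  # PCIe Gen5 signaling rate per lane
MIN_WIDTH = 16       # x16 link expected for this adapter


def parse_ib_rate(text: str) -> float:
    """Parse a port rate string such as '400 Gb/sec (4X NDR)' into Gb/s."""
    match = re.match(r"\s*([\d.]+)\s*Gb/sec", text)
    if match is None:
        raise ValueError(f"unrecognized rate string: {text!r}")
    return float(match.group(1))


def parse_pcie_speed(text: str) -> float:
    """Parse a PCIe link speed such as '32.0 GT/s PCIe' into GT/s."""
    match = re.match(r"\s*([\d.]+)\s*GT/s", text)
    if match is None:
        raise ValueError(f"unrecognized PCIe speed: {text!r}")
    return float(match.group(1))


def read_attr(path: Path) -> str:
    """Read one sysfs attribute and strip the trailing newline."""
    return path.read_text().strip()


def report() -> None:
    """Print PCIe link and port status for every RDMA device found."""
    if not IB_ROOT.is_dir():
        print("no /sys/class/infiniband directory; is the driver loaded?")
        return
    for dev in sorted(IB_ROOT.iterdir()):
        pci = dev / "device"  # symlink to the underlying PCI function
        try:
            speed = parse_pcie_speed(read_attr(pci / "current_link_speed"))
            width = int(read_attr(pci / "current_link_width"))
        except (OSError, ValueError) as exc:
            print(f"{dev.name}: could not read PCIe link info ({exc})")
            continue
        print(f"{dev.name}: PCIe {speed:g} GT/s x{width}")
        if speed < MIN_GT_PER_S or width < MIN_WIDTH:
            print("  warning: link is below Gen5 x16; 400Gb/s may be host-limited")
        for port in sorted((dev / "ports").iterdir()):
            state = read_attr(port / "state")  # e.g. '4: ACTIVE'
            rate = parse_ib_rate(read_attr(port / "rate"))
            print(f"  port {port.name}: {state}, {rate:g} Gb/s")


if __name__ == "__main__":
    report()
```

On Multi-Host or bifurcated configurations, each PCI function may legitimately negotiate a narrower link, so treat the width warning as a prompt to investigate rather than a fault.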

Related products:

- NVIDIA Quantum-2 NDR InfiniBand Switch (QM9700) - 64-port 400G

- NVIDIA BlueField-3 DPU - programmable data center infrastructure

- ConnectX-6 Dx - 100/200GbE Ethernet adapter for cost-efficient deployments

- LinkX Series Cables - 400G DAC/AOC certified for ConnectX-7

Documentation:

- NVIDIA ConnectX-7 PCIe Adapter User Manual (docs.nvidia.com)

- RDMA over Converged Ethernet (RoCE) Deployment Guide

- GPUDirect Storage Best Practices

- NVIDIA SHARP Technology White Paper